Agent memory is push, not pull

Why every memory layer for AI coding agents is silently broken, and what it took to fix it.

There’s a specific failure mode I keep watching, and I bet you’ve watched it too.

You’re working with Claude Code, or Cursor, or Windsurf, on a real codebase, and the agent makes the same mistake it made last week. Not a similar mistake. The same one. The same wrong import, the same misread of your error-wrapping convention, the same naive retry on a non-transient 401. You correct it again. You explain again. You move on, because the work has to ship, and you tell yourself the next session will be different.

It won’t be. Because your agent has no memory, and the memory tool you bolted on isn’t doing what you think it’s doing.

That’s the problem I built Mnemos to solve. Not “an agent memory layer,” because the field already has those, and most of them are silently broken in a way that took me longer to diagnose than I’d like to admit.

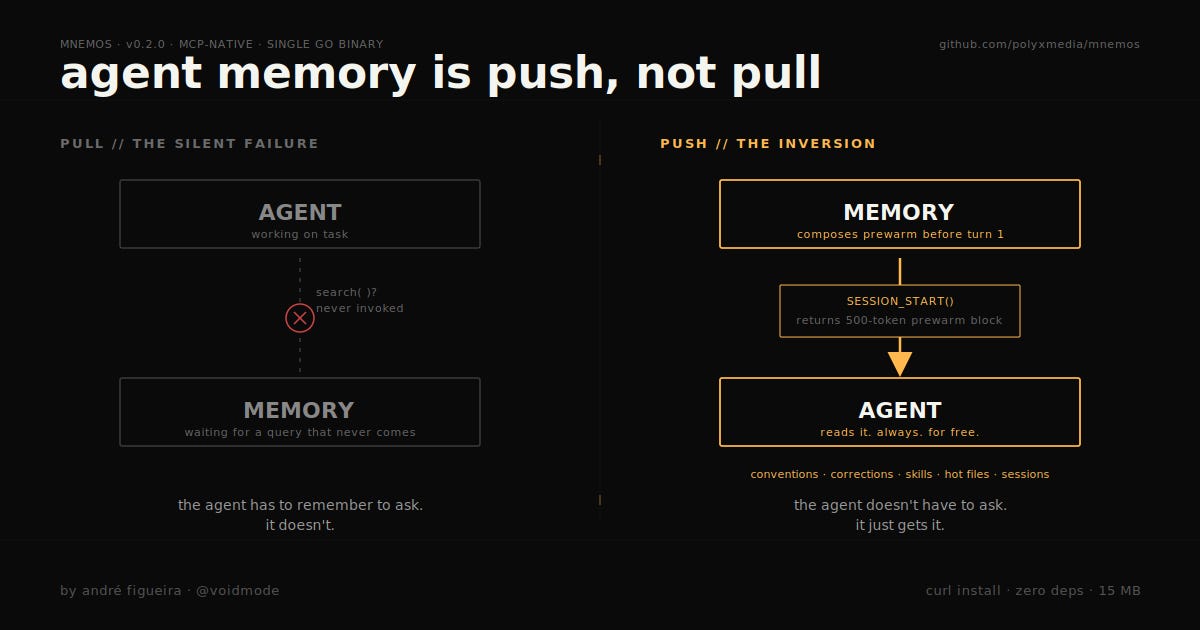

The pull assumption that nobody questions

Every memory tool I’ve evaluated, including the ones with tens of thousands of GitHub stars, ships with the same architectural assumption: the LLM will call your memory tool when it needs a memory.

It won’t.

It really, really won’t. Not reliably, not at the right moment, not with the right query. Frontier models are getting better at tool use, but “remember to consult your long-term memory before answering” is exactly the kind of housekeeping prompt that loses the fight against any concrete user instruction sitting next to it in context. The agent is focused on the task. Your memory tool is a peripheral. Peripherals get skipped.

This is a pull-based design. The memory layer sits there politely, exposes a search endpoint, and waits for the agent to ask. Mem0 does this. Zep does this. The thirty other startup memory layers all do this. Then they ship benchmarks where retrieval works great because the harness explicitly calls retrieval, and call it a day.

But the production failure mode isn’t “retrieval returns the wrong document.” The production failure mode is “retrieval was never invoked.” You can’t measure that on LongMemEval. You can only measure it by sitting at your terminal and watching your coding agent re-litigate decisions you’ve already made.

The inversion

Mnemos starts from the opposite assumption: the agent will not call your memory tool, so you have to push memory at the one moment the agent is guaranteed to look.

That moment is session start.

When an agent calls mnemos_session_start(project="my-repo", goal="fix the login bug"), it does not get back a session ID. It gets back a pre-warmed context block, around 500 tokens, composed from five sources:

Conventions you’ve declared for this project (”we use

fmt.Errorfwith%w, here’s why”)Summaries of your three most recent sessions on this project

The top three skills (procedural memory) matching the goal

Corrections from the journal whose trigger context matches the goal

The hot-file list, sorted by how often the agent has touched files on this project

That block is composed before the agent does anything. The agent doesn’t have to be smart enough to ask. The agent doesn’t have to remember to ask. The agent reads the prewarm because it’s already in context, and from that moment the project’s institutional memory is loaded.

This is not a small change. This is the entire ballgame.

Memory of mistakes is more valuable than memory of successes

The second insight is one I had to live through to believe.

Most memory layers store the conversation, then trust retrieval to surface the right slice when needed. Some get fancy and extract “facts” or “preferences” and store those instead. None of them, to my knowledge, treat failure as a first-class object.

In Mnemos, when the agent screws up and you correct it, the agent is supposed to call mnemos_correct with four required fields:

tried: what was attemptedwrong_because: why that was wrongfix: what workedtrigger_context: the situation that made it relevant

That’s it. Four fields, mandatory schema, stored as a typed correction observation with a retrieval-weight bonus. Next session on the same project, when the agent calls mnemos_session_start with a goal that matches, the correction surfaces in the prewarm, before the agent has a chance to make the same mistake again.

The failure modes of an LLM are far more compressible than its successes. Successes are diverse and contextual. Mistakes cluster. Most agent screw-ups on a given codebase fall into a handful of recurring categories: misreading a convention, missing a constraint, misunderstanding the data model, applying a pattern from another stack. If you record those failures with structure instead of dumping the whole transcript, you don’t need a vector database to retrieve them well. You need exactly enough metadata to match a goal to a previous mistake.

This compounds across weeks in a way that ordinary memory does not. Each correction takes maybe ten seconds for the agent to record, and saves an unknown but substantial amount of future debugging.

Compaction recovery, or: the moment everyone else fails

Here’s a primitive I haven’t seen in any other memory tool, and I’d love to be told I’m wrong about that.

Modern coding agents compact their context. Claude Code does it, Cursor does it, every long-running agent loop does it eventually, because context windows are finite and conversations are not. Compaction is essentially a summarization pass: the agent replaces a large block of prior conversation with a compressed version, frees up tokens, keeps going.

The problem is that compaction is lossy. The agent post-compaction often does not know what it was working on five minutes ago, what it had decided, what it had ruled out, or what the user wanted. You’ve watched this happen. The agent suddenly asks you a question whose answer is the entire prior twenty minutes of work.

Mnemos has a dedicated API for this exact moment: call mnemos_context with mode: "recovery", pass the session ID and goal, and you get back the current session’s goal, the in-session observations, the conventions, and a reconstructed picture of what the agent was doing. It’s the “oh god, context just got compacted” button. Drop it into your agent’s recovery hook (Claude Code has a hook for this) and compaction stops being a story-killing event.

I have not found this in Mem0, in Zep, in MemPalace, or in any of the other memory layers I’ve reviewed. If it’s in there and I missed it, please file an issue.

Memory stores are an injection vector and we should treat them like one

When you give an LLM persistent memory, you’ve given an attacker (or just a careless teammate) a way to write content that will be silently injected into future LLM contexts on a different machine, possibly belonging to a different user, with no review step in between.

This is a new attack surface and the field is largely ignoring it.

Mnemos runs every prewarm and recovery block through a safety.Scanner at the injection boundary. The scanner looks for instruction-override phrases, role-spoofing patterns, fake tool syntax, zero-width unicode, and bidi overrides. High-risk content gets wrapped in a visible [MNEMOS: FLAGGED risk=high rules=...] banner so the agent can see it and the user can see it. Low-risk content gets sanitized silently (zero-width and control chars stripped).

This is not a complete solution to memory-borne prompt injection. It is the difference between treating memory as trusted input and treating it like any other piece of untrusted data crossing a trust boundary. If you’re building on agent memory and you don’t have something equivalent in your stack, you have a problem you haven’t seen yet.

Bi-temporal truth, or: how to stop poisoning your own context

Most memory tools delete or overwrite. Mnemos does neither.

Every observation has two timelines: fact time (valid_from, valid_until, when the fact was true in the world) and system time (created_at, invalidated_at, when the system knew about it). When a fact changes, the old observation is marked invalid, not removed. Default searches don’t surface invalid observations, but historical queries (as_of: "2026-02-01") do, and the new fact is linked to the old one with a supersedes edge.

This sounds like academic database hygiene until you’ve watched an LLM confidently regurgitate a deprecated convention because it was in the memory store and no one ever pruned it. The default-live, optional-historical model is the right one. It’s also exactly how Datomic, XTDB, and serious financial systems handle the same problem. Borrowing the design was free; the alternative is context poisoning.

How it stacks up against what’s out there

Public docs as of April 2026. If anything below is wrong, the GitHub issues are open.

Mnemos Mem0 Zep MemPalace Language and runtime Go, single binary Python service Go server plus Postgres or Neo4j Python plus Chroma MCP-native Yes Via bridge Via bridge Yes Push-based session prewarm Yes No No No Compaction recovery Yes No No No Structured correction journal Yes (typed schema) No No No Promptware scanner at injection boundary Yes No No No Bi-temporal model Yes Temporal extraction Yes (Graphiti) Validity windows Hybrid retrieval BM25 plus vectors (RRF) Vectors plus LLM rerank Hybrid graph plus vectors Vectors Local-first (zero external deps) Yes No (SaaS primary) Yes (self-host) Yes Auto-enables Ollama if present Yes No No No

What others do better: Mem0 has the largest community by far, and a mature integrations library. Zep’s Graphiti has a far more sophisticated knowledge graph with entity extraction. MemPalace mines verbatim conversations, which Mnemos deliberately does not. None of these are “wrong” choices, they’re just different design points.

Mnemos’s bet is that for AI coding agents specifically, the right design is curated, push-based, structurally typed, local-first, and zero-infrastructure. If that bet is right, the field has been overcomplicating this for two years.

The rumination loop, or: Popper inside the memory layer

This one I’ll put a spotlight on because it’s where Mnemos goes from “memory layer” to “epistemic infrastructure.”

Skills (procedural memory) are versioned. Each skill has a success and use count, which gives an effectiveness ratio. When the dream consolidation pass runs, it scans for skills whose effectiveness is dropping, or whose recent failures contradict the rule the skill encodes, and it adds those to a rumination queue.

When the agent picks up a candidate from the queue, it doesn’t just rewrite the skill. It has to:

State the hypothesis verbatim

Read the disconfirming evidence the system has collected

Steel-man the original rule before changing it

Identify the fatal flaw, distinguish a real falsification from noise, name any context shift

Propose a revision

And here’s the part that matters: when the agent calls mnemos_ruminate_resolve, it must pass a why_better field, minimum sixteen characters, that names a concrete new prediction the revised skill makes that the old one did not. The resolve endpoint rejects cosmetic rewordings.

This is Popper’s falsifiability criterion enforced at the API boundary. The agent cannot “improve” a skill by making it vaguer or by rewriting it without committing to a new testable claim. The store will not let it.

I am not aware of any other memory layer that has a mechanism resembling this. The closest analog is human research practice: when you revise a hypothesis, you owe the world a new prediction. If you don’t, you’re not learning, you’re rationalizing.

Why Go, why one binary, why no vector DB

Practical answers, briefly.

Go because it cross-compiles to a single 15 MB static binary for Linux, macOS, and Windows on amd64 and arm64, with zero CGO. There is no Docker on the install path. There is no Python runtime on the install path. There is no Postgres, no Neo4j, no Pinecone, no Weaviate, no Chroma, no Qdrant. The install command is one curl. The dependency footprint is the binary, a SQLite file in your home directory, and optionally Ollama if you want vector search to auto-enable.

SQLite with FTS5 because BM25 ranking on FTS5 is, on most agent memory queries, indistinguishable in quality from vector retrieval, at orders of magnitude lower latency and zero infrastructure cost. When Ollama is detected on the host, vectors auto-enable, get stored as BLOBs in the same SQLite file, and feed into a hybrid ranker (BM25 plus cosine via Reciprocal Rank Fusion). When Ollama is not present, the system falls back to pure FTS5 silently. There is no configuration step.

This is what local-first AI infrastructure should look like. The fact that almost nobody else is shipping it this way is, I think, mostly a function of teams reaching for Python because Python is what they know.

What this unlocks, in practice

After a week of using Mnemos with Claude Code on a real Go codebase, the agent stops re-asking about my error-wrapping convention. After two weeks, it stops repeating the OAuth retry mistake. After a month, the correction journal has compounded into a project-specific intuition that survives across sessions, across context compactions, and across model upgrades.

The agent gets more useful per token. Token budgets get tighter, not looser, because the prewarm is curated to ~500 tokens and replaces hundreds or thousands of tokens of repeated re-explanation. That is the actually-real productivity win, and it’s measurable in your monthly bill.

You get session replay (mnemos replay <session_id>), which generates a markdown recap of a past session enriched with everything you’ve learned since. Paste it back into your agent and ask “what would you do differently now?” That is a retrospective-self-improvement loop nobody else is shipping.

You get portable skill packs. Export any skill (or all of them) as a JSON pack, share via file or URL, and mnemos skill import https://... installs it in one command. Procedural memory becomes a social layer.

You get an Obsidian vault export, so the entire memory store renders as a markdown graph with wikilinks, browseable in any editor.

Try it

curl -fsSL https://raw.githubusercontent.com/polyxmedia/mnemos/main/scripts/install.sh | bash

mnemos init

# restart your agent. that's the install.

mnemos init auto-wires Claude Code, Claude Desktop, Cursor, Windsurf, and OpenAI Codex CLI. Anything else that speaks MCP over stdio works too, point it at mnemos serve.

The repo is polyxmedia/mnemos. MIT licensed. Single binary. No telemetry. Issues open.

If you’ve been quietly suspicious that your agent memory layer wasn’t actually doing anything, you were probably right. Try this one for a week and see whether the agent stops repeating itself.

That’s the whole pitch.

Mnemos is built by André Figueira at Polyxmedia. If it helps you ship, a star is the fastest way to say so.